Changing Clothes and Background in Photos using Stable Diffusion Inpainting

Stable Diffusion makes two traditionally laborious photo-editing tasks, background replacement and wardrobe changes, far more approachable. In this blog post, we walk through changing backgrounds and swapping out clothes using inpainting with Stable Diffusion XL (SDXL), and show how these techniques turn daunting edits into manageable, creative opportunities.

Stable Diffusion Inpainting Models

Before we dive deeper, let's very briefly look at the inpainting techniques we will be exploring in this blog post and how they work.

SDXL Inpainting is a latent text-to-image diffusion model that can generate photorealistic images from a text prompt and, in addition, repaint selected parts of an existing image. A mask marks the regions that need changing: the model regenerates the masked regions according to the prompt, while the unmasked regions are preserved. During training, the model is shown synthetic masks so that it learns to fill regions of arbitrary shape. ControlNet conditioning can also be used in conjunction with SDXL Inpainting to provide additional guidance, allowing more control over the generated images.
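As a rough illustration of what the mask does, the sketch below composites a "generated" grid into an "original" grid. It is a conceptual simplification, not how SDXL works internally (the model operates on latents and receives the mask as an extra input). The function name `composite_with_mask` and the tiny grayscale grids are made up for this example. It follows the common inpainting convention in which white (255) mask pixels are repainted and black (0) pixels are kept.

```python
def composite_with_mask(original, generated, mask):
    """Blend two equally sized grayscale grids using a 0-255 mask.

    Mask value 255 takes the generated pixel, 0 keeps the original pixel,
    and values in between mix the two (a soft mask edge).
    """
    if not (len(original) == len(generated) == len(mask)):
        raise ValueError("all grids must have the same number of rows")
    blended = []
    for o_row, g_row, m_row in zip(original, generated, mask):
        if not (len(o_row) == len(g_row) == len(m_row)):
            raise ValueError("all rows must have the same length")
        row = []
        for o, g, m in zip(o_row, g_row, m_row):
            weight = m / 255.0  # 1.0 = fully repainted, 0.0 = fully kept
            row.append(round(weight * g + (1.0 - weight) * o))
        blended.append(row)
    return blended


# Hypothetical 2x3 "images": a dark original and a bright generated result.
original = [[10, 10, 10], [10, 10, 10]]
generated = [[200, 200, 200], [200, 200, 200]]
mask = [[0, 255, 255], [0, 0, 255]]  # white = repaint, black = keep

result = composite_with_mask(original, generated, mask)
print(result)
```

Only the positions where the mask is white take on the generated values; everything else is carried over from the original.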

Background Replacement using Stable Diffusion

If you are a professional photographer or represent an agency specializing in professional photoshoots for models or brands, conducting photoshoots in different locations can be both time-consuming and expensive.

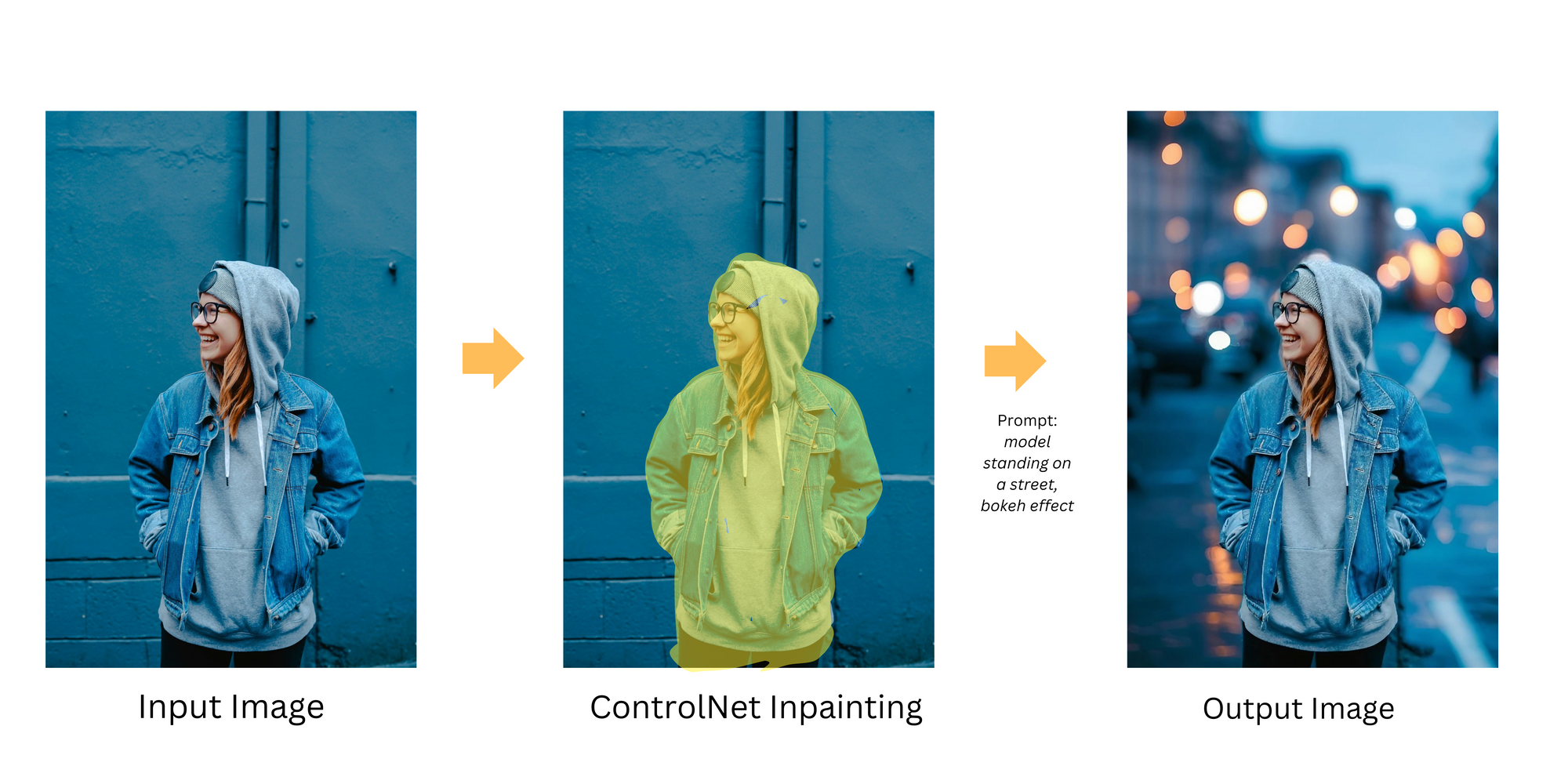

ControlNet Inpainting uses a mask to decide which parts of the image to retain and which to regenerate. An inpaint mask is created around the subject, separating it from the background. The background region is then regenerated according to the provided text prompt, while the subject is carried over from the original image. ControlNet adds further conditions in the form of control images (e.g., depth maps, canny edges, or human poses), which steer the image generation process.
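A minimal sketch of this workflow with the Hugging Face `diffusers` library might look like the following. Several things here are assumptions rather than details from this post: the checkpoint names (`stabilityai/stable-diffusion-xl-base-1.0` and `diffusers/controlnet-depth-sdxl-1.0`), the use of a depth map as the control image, the default parameter values, and the helper names `invert_subject_mask` and `replace_background`. It also assumes a CUDA GPU and that the subject mask and control image have already been produced by some other tool.

```python
def invert_subject_mask(subject_mask):
    """Turn a subject mask (white = subject) into a background mask (white = background)."""
    from PIL import ImageOps  # imported lazily so this file loads without Pillow

    return ImageOps.invert(subject_mask.convert("L"))


def replace_background(image, subject_mask, control_image, prompt,
                       conditioning_scale=0.5, steps=30, seed=0):
    """Repaint everything except the subject, guided by a prompt and a control image.

    `image`, `subject_mask`, and `control_image` are PIL images of the same size.
    """
    if conditioning_scale < 0:
        raise ValueError("conditioning_scale must be non-negative")

    # Heavy dependencies are imported here so that importing this file stays cheap.
    import torch
    from diffusers import ControlNetModel, StableDiffusionXLControlNetInpaintPipeline

    # Assumed checkpoints; the post does not state which ones were used.
    controlnet = ControlNetModel.from_pretrained(
        "diffusers/controlnet-depth-sdxl-1.0", torch_dtype=torch.float16
    )
    pipe = StableDiffusionXLControlNetInpaintPipeline.from_pretrained(
        "stabilityai/stable-diffusion-xl-base-1.0",
        controlnet=controlnet,
        torch_dtype=torch.float16,
    ).to("cuda")  # assumes a CUDA GPU is available

    result = pipe(
        prompt=prompt,
        image=image,
        mask_image=invert_subject_mask(subject_mask),  # white = area to repaint
        control_image=control_image,
        controlnet_conditioning_scale=conditioning_scale,
        num_inference_steps=steps,
        generator=torch.Generator(device="cuda").manual_seed(seed),
    )
    return result.images[0]
```

A call could then look like `replace_background(photo, subject_mask, depth_map, "city street at night, streetlights, bokeh")`, where `photo`, `subject_mask`, and `depth_map` are placeholder names for images loaded by the caller.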

As demonstrated in the example below, we transformed a rather dull and uninteresting background in a photograph of a model into a vibrant setting with streetlights and a pleasing bokeh effect.

With just a single photograph featuring a model in a hoodie and a jacket, we can create images with a wide range of backgrounds or settings, all achieved by replacing backgrounds with ControlNet Inpainting.

ControlNet Inpainting significantly reduces the need for on-location shoots, saving both time and expense. It also lets photographers experiment with a wide variety of backgrounds without being limited by physical locations. Most importantly, only the masked background is regenerated, so the subject of the photograph stays as it was in the original. This preserves the photograph's realism and makes the technique a valuable tool for photographers who want to expand their creative options while streamlining their workflow.

Changing Clothes using Stable Diffusion Inpainting

Consider another scenario: you are a photographer conducting a photoshoot with a model. In each photograph, the model is expected to change into a different set of clothes. This process can be time-consuming and exhausting for both the photographer and the model, not to mention the many outfits the model has to carry.

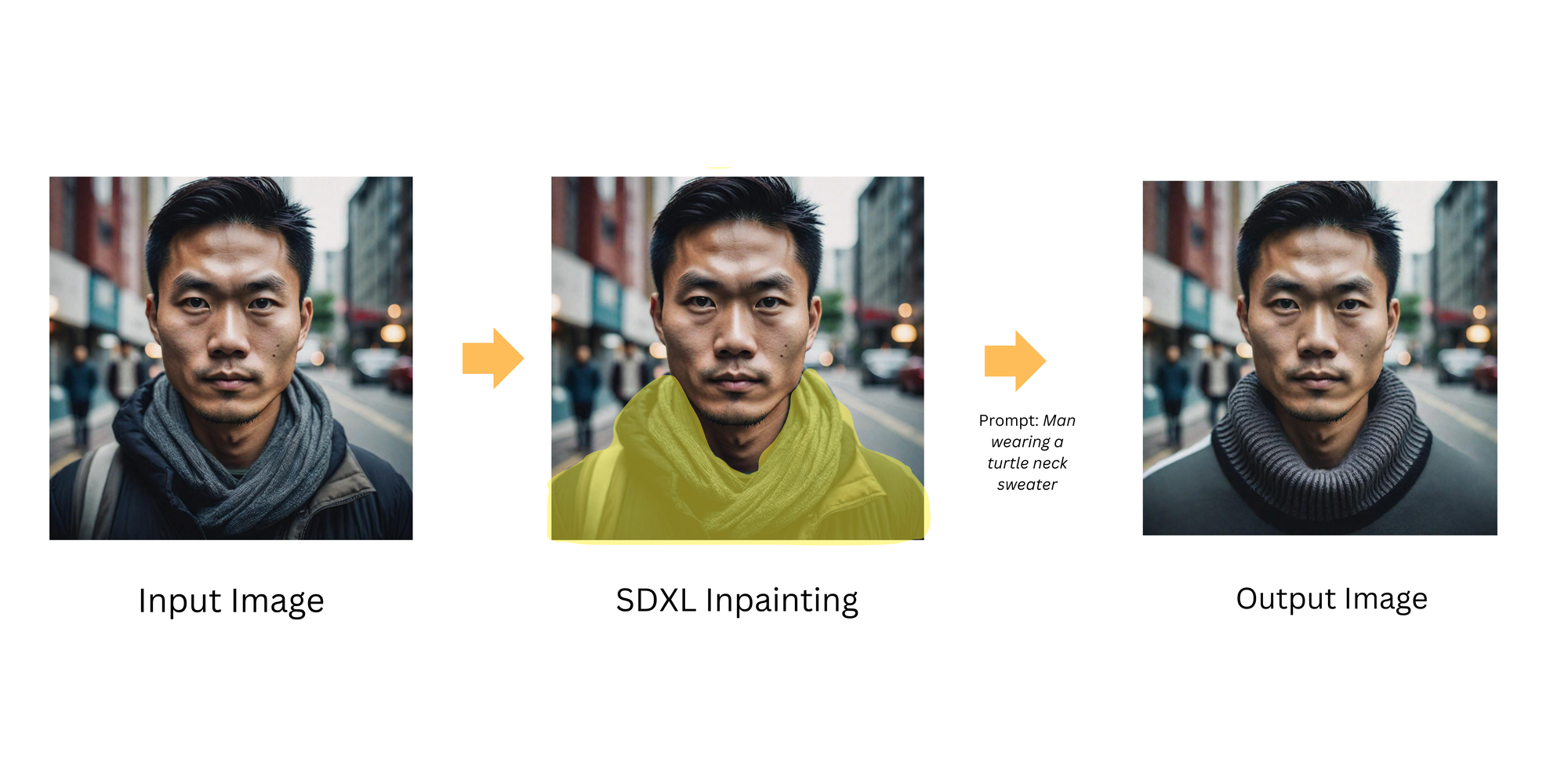

SDXL inpainting transforms images in four steps. It begins with the input image, which serves as the canvas for alterations. Next, a mask is applied to highlight the specific areas to change. A text prompt then guides the inpainting model, describing the desired modifications. Finally, the model processes these inputs and generates an output image that blends the new elements, dictated by the mask and text prompt, with the original image.
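The four steps can be sketched with the Hugging Face `diffusers` library as follows. This is an illustrative outline under stated assumptions, not necessarily the setup used for the examples in this post: the checkpoint `diffusers/stable-diffusion-xl-1.0-inpainting-0.1`, the function name `change_outfit`, and the default values for `strength`, `guidance_scale`, and `steps` are assumptions chosen as reasonable starting points. A CUDA GPU is assumed as well.

```python
def change_outfit(image, mask, prompt, strength=0.99, guidance_scale=8.0,
                  steps=20, size=(1024, 1024)):
    """Repaint the masked clothing region of `image` according to `prompt`.

    `image` and `mask` are PIL images; white mask pixels mark the clothes to replace.
    """
    if not 0.0 < strength < 1.0:
        raise ValueError("strength must be between 0 and 1 (exclusive)")

    # Heavy dependencies are imported here so that importing this file stays cheap.
    import torch
    from diffusers import AutoPipelineForInpainting

    # Assumed checkpoint; the post does not name the exact weights it used.
    pipe = AutoPipelineForInpainting.from_pretrained(
        "diffusers/stable-diffusion-xl-1.0-inpainting-0.1",
        torch_dtype=torch.float16,
        variant="fp16",
    ).to("cuda")  # assumes a CUDA GPU is available

    # Step 1: the input image is the canvas.
    image = image.convert("RGB").resize(size)
    # Step 2: the mask highlights the area to change (white = repaint).
    mask = mask.convert("L").resize(size)
    # Step 3: the text prompt describes the desired modification.
    # Step 4: the model combines all three inputs into the output image.
    result = pipe(
        prompt=prompt,
        image=image,
        mask_image=mask,
        strength=strength,
        guidance_scale=guidance_scale,
        num_inference_steps=steps,
    )
    return result.images[0]
```

For instance, `change_outfit(photo, clothes_mask, "a model wearing a turtleneck sweater")` would return a new PIL image, with `photo` and `clothes_mask` being placeholder names for images the caller has loaded.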

As illustrated in the example below, we transformed an image of a model wearing a scarf and jacket into one where the model dons a turtleneck sweater.

With just a single photograph of a model in a scarf and jacket, we can create a wide range of images, each featuring the same model in different outfits, all achieved using SDXL Inpainting.

SDXL Inpainting streamlines the outfit-changing process, eliminating the physical necessity for models to change clothes, which not only speeds up the photoshoot but also reduces the fatigue typically associated with frequent wardrobe changes. It opens the door to a myriad of versatile wardrobe options, allowing photographers to offer a broad spectrum of clothing choices without the logistical demands of a physical wardrobe. Additionally, the SDXL Inpainting process ensures that the swapped clothing appears seamlessly integrated with the original image, preserving its realism and natural appearance.

Conclusion

As illustrated in our examples, the possibilities are boundless. With just a single photograph, you can embark on a visual journey, transforming scenes and outfits with unparalleled ease. The result? A photography experience that is not only more efficient and cost-effective but also brimming with limitless creative potential.