Introducing Segmind APIs

Developers can now integrate open-source generative models into their apps and products through our serverless API.

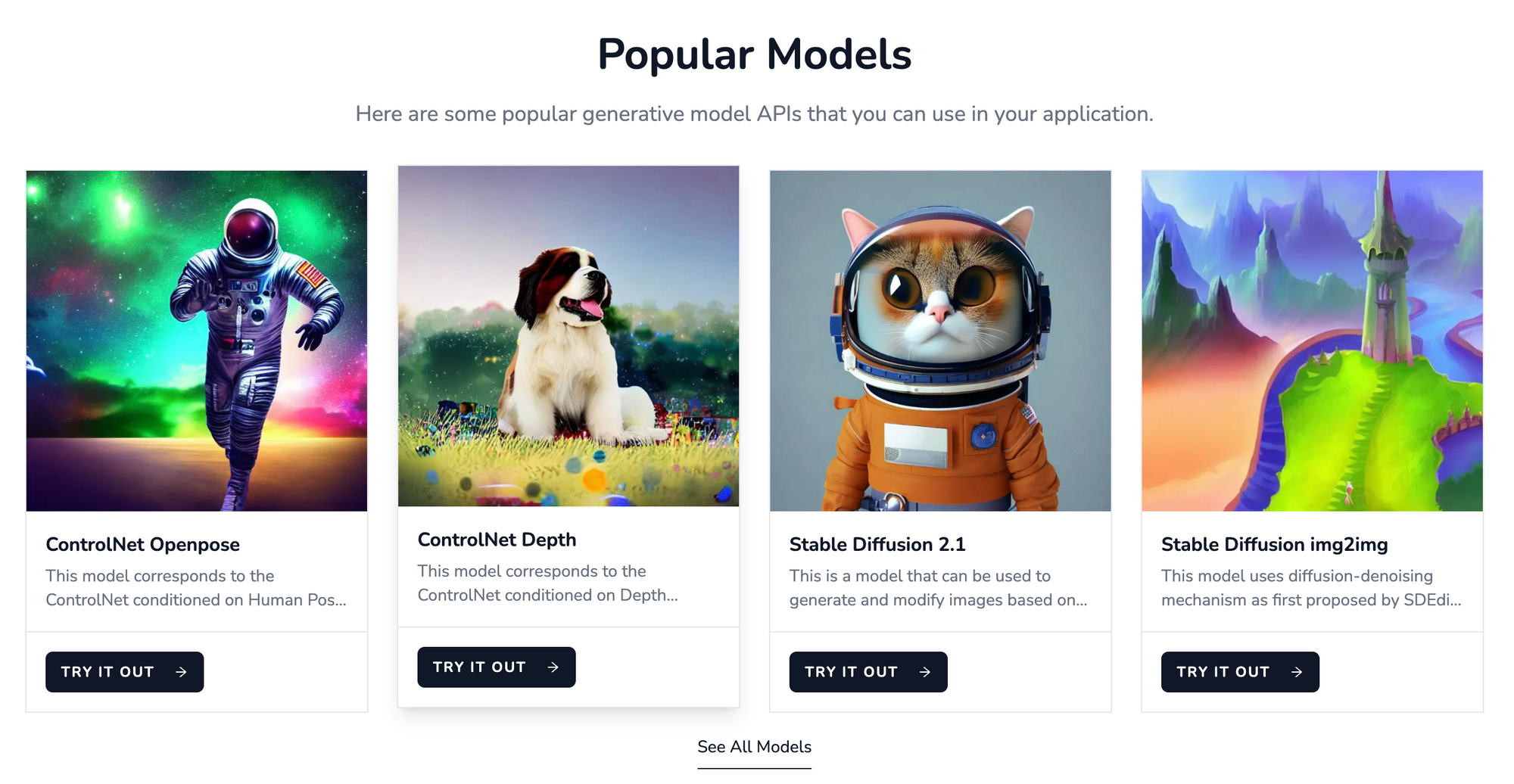

We are excited to announce the launch of the Segmind Serverless APIs, a fast and easy way to run machine learning models without having to set up any infrastructure. Developers can now call open-source generative models through an API and get faster, more cost-effective inference.

All the APIs are powered by voltaML's optimizations under the hood to give you the fastest inference times for these models. We have added Stable Diffusion and ControlNet models and plan to add newer generative models over the coming weeks. Check out our documentation page to learn more about the exact models available.

Try out the APIs for free by signing up on our website. Developers get 100 API calls per day for free to get started.

Serverless API

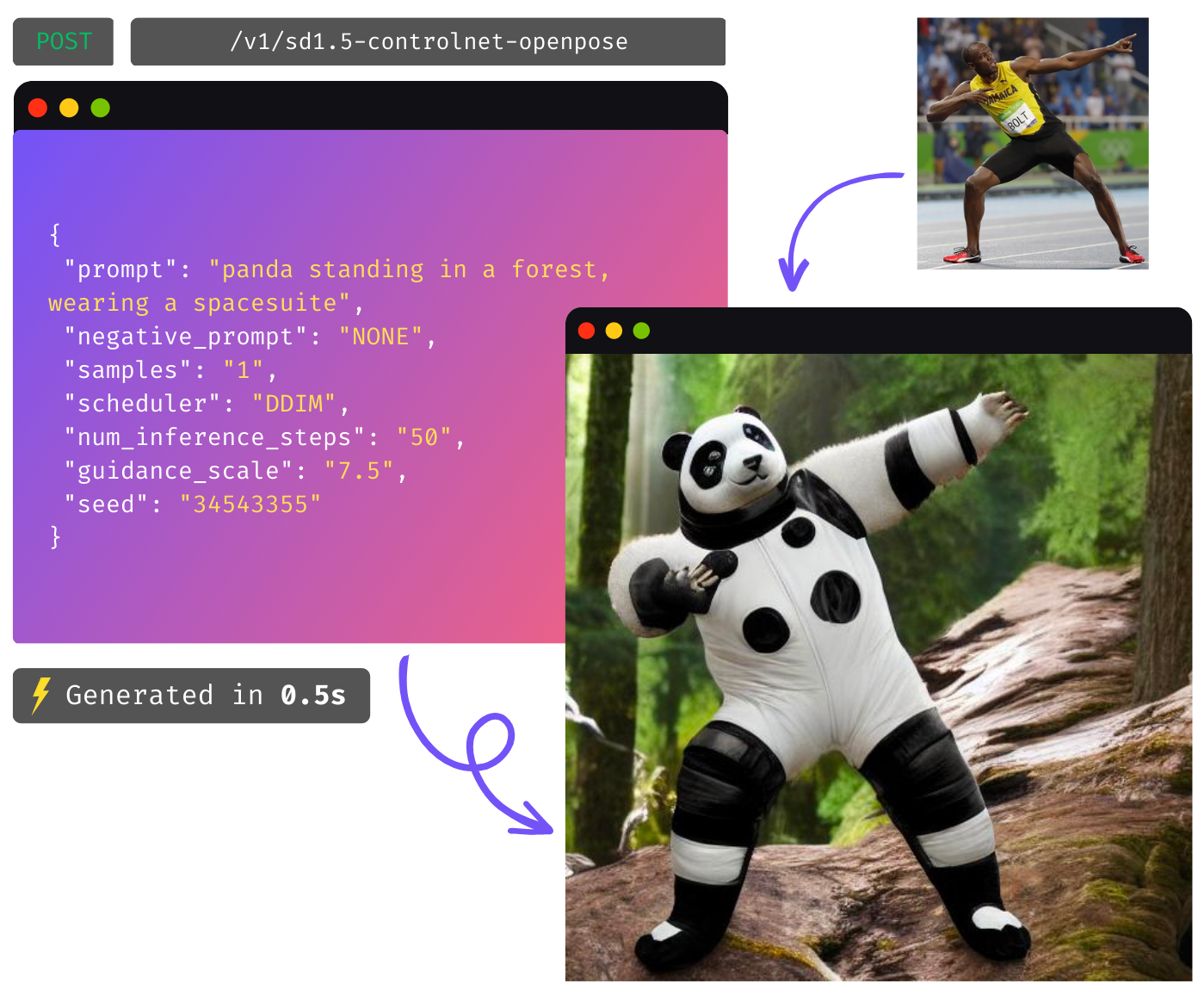

You can run an inference with a simple POST request. For example, to use the Stable Diffusion 2.1 txt2img model, send the following parameters in the request body.

    # Prompt to render, e.g. "Stormtrooper giving a lecture"
    prompt: str

    # Prompts to exclude, e.g. "bad anatomy, bad hands, missing fingers"
    # Default: None
    negative_prompt: str

    # Number of output images
    # Default: 1
    samples: int

    # Type of scheduler.
    # Options: ["DDIM", "DPM Multi", "DPM Single", "Euler a", "Euler", "Heun", "DPM2 a Karras", "DPM2 Karras", "LMS", "PNDM", "DDPM", "UniPC"]
    # Default: UniPC
    scheduler: enum

    # Number of denoising steps.
    # Default: 20; Max: 50
    num_inference_steps: int

    # Scale for classifier-free guidance
    # Default: 7.5
    guidance_scale: float

    # Seed for image generation.
    # Default: Random
    seed: int

    # Image resolution. Options are 512 x 512 or 768 x 768.
    # Options: ["512", "768"]
    # Default: 512
    shape: int

You can send a POST request to https://api.segmind.com/v1/sd2.1-txt2img
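As a rough illustration, the request could be assembled in Python along the following lines. This is a minimal sketch, not the official client: the parameter names, defaults, and endpoint come from the listing above, while the helper names `build_payload` and `generate`, the JSON-encoded body, the `x-api-key` header, the lower bound of 1 step, and the handling of the response as raw bytes are all assumptions made for the example.

```python
import json
import urllib.request

# Endpoint as given in this post.
API_URL = "https://api.segmind.com/v1/sd2.1-txt2img"

# Scheduler options as listed in this post.
SCHEDULERS = [
    "DDIM", "DPM Multi", "DPM Single", "Euler a", "Euler", "Heun",
    "DPM2 a Karras", "DPM2 Karras", "LMS", "PNDM", "DDPM", "UniPC",
]


def build_payload(prompt, negative_prompt=None, samples=1, scheduler="UniPC",
                  num_inference_steps=20, guidance_scale=7.5, seed=None,
                  shape=512):
    """Check arguments against the documented options and return the body as a dict."""
    if scheduler not in SCHEDULERS:
        raise ValueError(f"unknown scheduler: {scheduler!r}")
    # The post documents a maximum of 50; the minimum of 1 is an assumption.
    if not 1 <= num_inference_steps <= 50:
        raise ValueError("num_inference_steps must be between 1 and 50")
    if shape not in (512, 768):
        raise ValueError("shape must be 512 or 768")

    payload = {
        "prompt": prompt,
        "samples": samples,
        "scheduler": scheduler,
        "num_inference_steps": num_inference_steps,
        "guidance_scale": guidance_scale,
        "shape": shape,
    }
    # Optional fields are omitted so the service can apply its own defaults.
    if negative_prompt is not None:
        payload["negative_prompt"] = negative_prompt
    if seed is not None:
        payload["seed"] = seed
    return payload


def generate(payload, api_key):
    """POST the payload and return the raw response bytes.

    Assumptions: the body is JSON, the key is sent in an `x-api-key` header,
    and the response is returned as bytes without further decoding.
    """
    request = urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json", "x-api-key": api_key},
        method="POST",
    )
    with urllib.request.urlopen(request) as response:
        return response.read()
```

With a valid key, `generate(build_payload("Stormtrooper giving a lecture"), api_key)` would send the request. Check the documentation page for the actual authentication scheme and response format before relying on this sketch.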

You can try popular models using the link below.

Future work

We plan to expand the set of models we support to help developers build apps with generative features built in. Newer image models, including newer versions of Stable Diffusion and ControlNet, are in the pipeline. You will also see an option to choose dedicated capacity for deeper control over the model's performance and security.