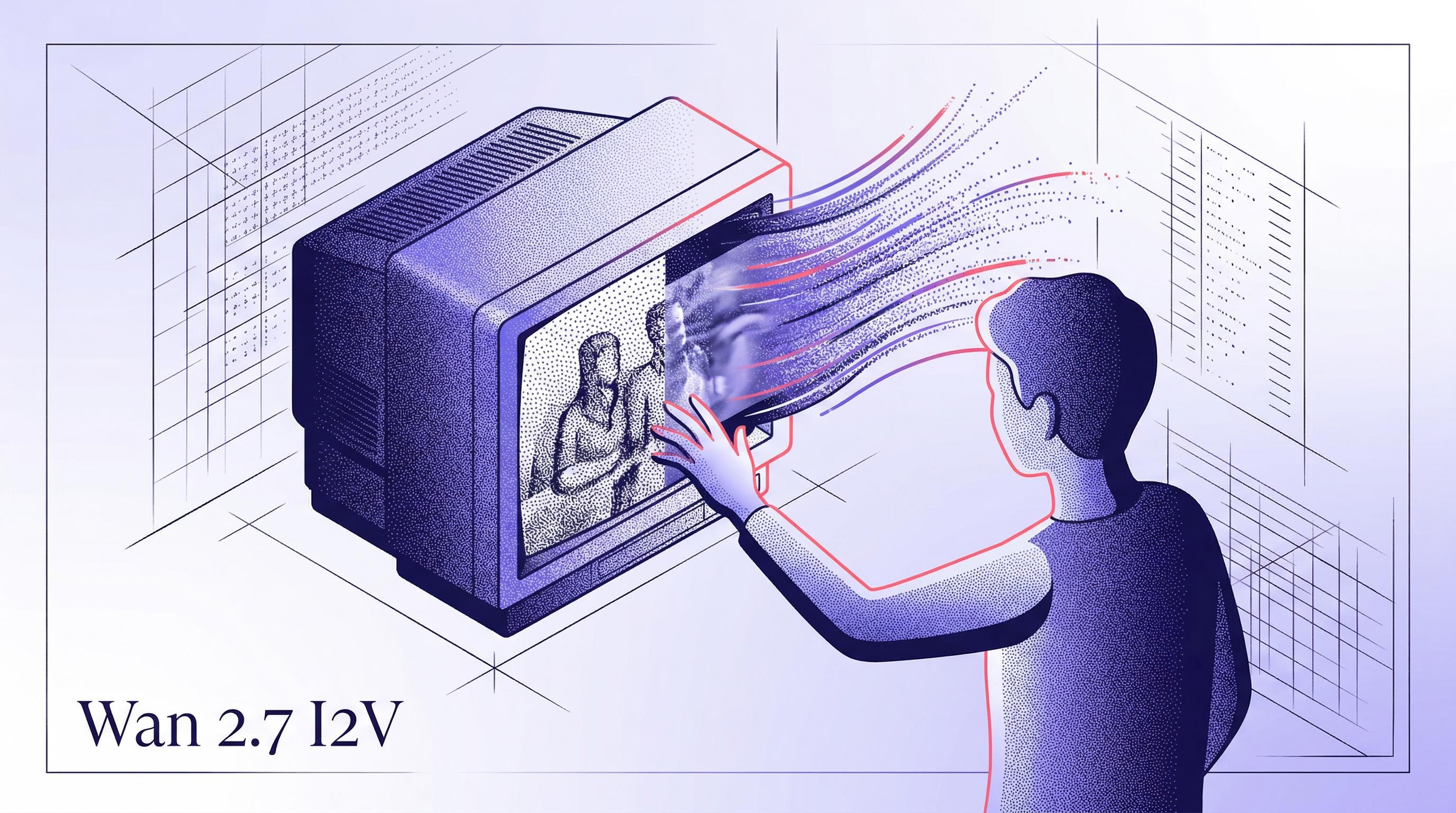

Wan 2.7 Image to Video is Now on Segmind: Animate Any Image in Seconds

Wan 2.7 I2V is now on Segmind. Turn any still image into a cinematic video clip at up to 1080P via API. Use it for product reveals, social ads, and film B-roll, starting at $0.625 per 720P clip.

Demand for AI image-to-video API tools has exploded in 2026. Search interest for "image to video AI" is holding steady near peak levels globally, and every team I talk to, from indie marketing agencies to post-production studios, is trying to figure out how to produce more video content without hiring more people or blowing their production budget. Wan 2.7 Image to Video is one of the most capable models I've tested for this, and it's now available on Segmind.

What is Wan 2.7 Image to Video?

Wan 2.7 I2V is a state-of-the-art image-to-video generation model from Alibaba's research team. You feed it a still image and a text prompt describing the motion you want, and it produces a fully animated video clip of up to 15 seconds at up to 1080P resolution. What makes it stand out is the combination of cinematic motion quality, first/last frame control (so you can guide exactly where the animation starts and ends), and optional audio sync so characters can move in rhythm with a provided audio track. I ran 7 test cases across marketing, film, and content production use cases, and the motion quality held up across all of them.

What you can build with it

- Marketing agencies: Take a product shot or lifestyle image and turn it into a polished ad clip in under 5 minutes. No studio. No shoot day. I animated a cosmetics product shot with a dramatic particle reveal and a lifestyle model clip with natural head movement and golden-hour lighting, both from a single image.

- Film studios and VFX teams: Use it for pre-visualization and concept reels. I generated a cinematic mountain pan with atmospheric fog and a character close-up with emotional micro-expression movement, both at the quality level you'd expect from a professional-grade B-roll pipeline.

- Production houses and MCNs (multi-channel networks): Automate the B-roll and filler content that eats up hours of editor time every week. Feed it a still from your shoot and get 5 seconds of usable motion content per generation, at $0.625 per clip.

See it in action

Here's one of the test outputs I generated — a marketing product reveal animation, starting from a single cosmetics product flat-lay image:

Wan 2.7 I2V output — cosmetics product reveal, starting from a single still image. 720P, 5 seconds.

Get started

The API is a single POST request. Pass an image URL, a motion prompt, your resolution preference, and a duration, and the response body is the MP4 itself as binary data. There's no setup, no infrastructure, and no GPU provisioning on your side. Here's the minimal call:

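```python
import requests

# Send the still image and motion prompt to the Wan 2.7 I2V endpoint.
response = requests.post(
    "https://api.segmind.com/v1/wan2.7-i2v",
    headers={"x-api-key": "YOUR_API_KEY"},
    json={
        "image": "https://your-image-url.jpg",
        "prompt": "The product rotates slowly, catching golden light, cinematic reveal",
        "resolution": "720P",
        "duration": 5,
    },
)
# Stop here on an HTTP error so an error message is not saved as a video.
response.raise_for_status()

# The response body is the MP4 itself; write it to disk as binary.
with open("output.mp4", "wb") as f:
    f.write(response.content)
```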
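If you're calling this from a script or a batch job, it can help to catch bad inputs before spending a generation on them. Here's a minimal sketch of that idea. `build_payload` is a hypothetical helper of mine, not part of any Segmind SDK; the field names mirror the call above, and the 720P/1080P and 15-second limits are the figures quoted in this post, not a published API schema, so treat the checks as assumptions.

```python
ALLOWED_RESOLUTIONS = ("720P", "1080P")  # the two resolutions priced in this post
MAX_DURATION_SECONDS = 15                # upper bound quoted in this post


def build_payload(image_url, prompt, resolution="720P", duration=5):
    """Return the JSON body for the request after basic local checks.

    Hypothetical helper: field names follow the minimal call above; the
    limits are assumptions taken from this post, not an official schema.
    """
    if not prompt.strip():
        raise ValueError("prompt must describe the motion you want")
    if resolution not in ALLOWED_RESOLUTIONS:
        raise ValueError(f"resolution must be one of {ALLOWED_RESOLUTIONS}")
    if not 0 < duration <= MAX_DURATION_SECONDS:
        raise ValueError(f"duration must be in (0, {MAX_DURATION_SECONDS}] seconds")
    return {
        "image": image_url,
        "prompt": prompt,
        "resolution": resolution,
        "duration": duration,
    }


# Same inputs as the minimal call, using the default resolution and duration.
payload = build_payload(
    "https://your-image-url.jpg",
    "The product rotates slowly, catching golden light, cinematic reveal",
)
```

The returned dict can be passed straight to the `json=` argument of the `requests.post` call shown earlier.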

Pricing starts at $0.625 per 720P generation and $0.9375 for 1080P. You can try it now at the playground or integrate directly via API: segmind.com/models/wan2.7-i2v
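To budget a batch, the arithmetic is just clips times price. Here's a rough sketch using the two prices above. `estimate_cost` is a hypothetical helper, and it assumes a flat price per generation at each resolution; I haven't confirmed whether longer durations are billed differently, so check the pricing page before relying on it.

```python
from decimal import Decimal

# Per-generation prices quoted in this post (assumed flat per clip).
PRICE_PER_GENERATION = {
    "720P": Decimal("0.625"),
    "1080P": Decimal("0.9375"),
}


def estimate_cost(clips_by_resolution):
    """Sum price * count for each resolution (hypothetical helper)."""
    return sum(
        (PRICE_PER_GENERATION[res] * count for res, count in clips_by_resolution.items()),
        Decimal("0"),
    )


# Example: 100 clips at 720P plus 20 clips at 1080P.
monthly = estimate_cost({"720P": 100, "1080P": 20})
print(f"${monthly:.2f}")  # prints $81.25
```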