AI Image Generation API: Wan 2.7 Image Pro Review, Real-World Use Cases 2026

Wan 2.7 Image Pro API review: real-world tests for marketing agencies, film studios, and MCNs. Native 4K, chain-of-thought reasoning, full code examples.

Search interest in "best AI image generation" tools hit consistent highs through Q1 2026, and the tools worth tracking aren't the ones making the loudest noise — they're the ones quietly shipping serious capability. Wan 2.7 Image Pro is one of those. I've been running it through real-world tests across three industries and the results are worth writing up in detail.

The core problem this solves: developers and creative teams need production-quality image generation without the infrastructure overhead of running their own models, and without paying enterprise pricing for simple API access. If you're building a product, an internal tool, or a content pipeline, you want something you can call with a few lines of code and get something genuinely usable back. That's what this post is about.

I'll cover what the model is, walk through real use cases for marketing agencies, film studios, and production houses, share the actual API calls I ran, and give you an honest read on where it excels and where it has limits.

What is Wan 2.7 Image Pro?

Wan 2.7 Image Pro is the latest release from Wan's image generation research, now available via Segmind's AI image generation API. What makes it technically distinct from most text-to-image models is chain-of-thought reasoning at inference time. Most image models go straight from prompt to sampling: they condition on the text and sample an image from a learned distribution, with no explicit planning step. Wan 2.7 Pro reasons through the prompt first: it considers scene composition, lighting logic, spatial relationships, and text rendering before committing to a generation pass.

In practice, this means prompts that would trip up other models — complex scenes, detailed product shots, multilingual text — tend to come out cleaner. The model supports native output up to 4K resolution, takes optional reference images for consistency control, and handles multilingual prompts natively. At $0.037 per generation, it sits in a competitive range for the quality tier it's operating at.

Key Capabilities

4K native output. Many image APIs generate at a lower resolution and upscale. Wan 2.7 Pro generates at 1K, 2K, or 4K natively, so the detail structure is fundamentally different from an upscaled 512px image. For use cases where image fidelity is critical (product photography, film concept art, print assets), that difference is significant.

Chain-of-thought composition. The model reasons through scene logic before generating. In my tests this showed up most clearly in complex prompts — multi-subject scenes, detailed environments, and technically demanding compositions came out correctly structured without needing extensive prompt engineering.

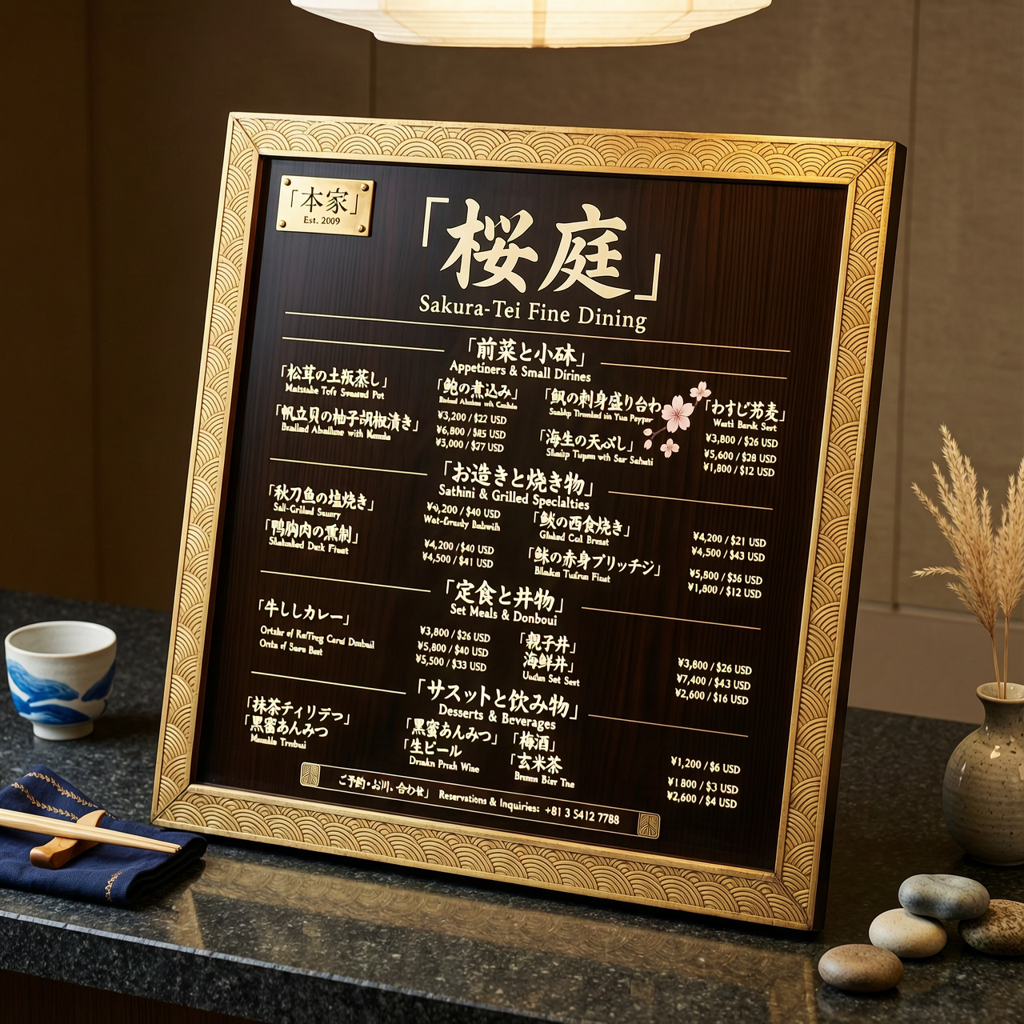

Multilingual text rendering. One of the hardest problems in image generation is rendering legible text. Wan 2.7 Pro handles it. I tested a Japanese/English restaurant menu prompt and got legible characters in both languages — a test most models fail completely.

Multi-reference consistency control. You can pass a reference image to anchor style, character, or object consistency across generations. Useful for building production pipelines where you need visual coherence across a set of images.

Synchronous API, no polling. The API returns a JSON response with image URLs directly — no webhook, no polling loop. Simpler to integrate, especially for batch processing workflows.

Multilingual text rendering: legible English and Japanese characters in a single prompt.

Use Case 1: Marketing Agencies

Marketing teams keep running into the same wall: creative throughput. An agency producing content for 10 clients needs hundreds of visuals a month — product shots, campaign imagery, ad variants. Traditional production is too slow and too expensive to scale. Stock libraries don't capture brand specificity. The "best AI image generation" tools that agencies actually use need to produce commercial-quality output that holds up when a client reviews it.

I tested two marketing scenarios with Wan 2.7 Pro. First, a luxury product ad — a perfume bottle on marble with rose petals and golden lighting, targeting commercial photography quality. Second, a high-fashion editorial — model in a black coat, urban setting, side lighting. Both came back clean. The product shot in particular had the kind of studio lighting logic that usually requires significant prompt engineering to get right.

The workflow for an agency building on this is straightforward: maintain a prompt template library per client/brand, swap product and scene descriptors, generate variants at 2K, run a batch job overnight. A brand that previously needed 2 days of photoshoot scheduling for 20 product images can get those in an afternoon.

    import requests

    def generate_product_visual(prompt, api_key):
        """Request one 2K image for the prompt and return its URL."""
        response = requests.post(
            "https://api.segmind.com/v1/wan2.7-image-pro",
            headers={"x-api-key": api_key},
            json={
                "messages": [
                    {
                        "role": "user",
                        "content": [{"type": "text", "text": prompt}]
                    }
                ],
                "size": "2K"
            },
            timeout=120,  # arbitrary but generous; generation can take a while
        )
        response.raise_for_status()  # fail on HTTP errors instead of a KeyError below
        return response.json()["choices"][0]["message"]["content"][0]["image"]

    # Generate luxury product shot
    url = generate_product_visual(
        "A luxurious perfume bottle on marble, rose petals, golden studio lighting, "
        "commercial advertisement quality, photorealistic",
        "YOUR_API_KEY"
    )

Figure: luxury product ad (left) and fashion campaign editorial (right), both generated with Wan 2.7 Image Pro via Segmind.

What makes this better than alternatives for agency use: the 4K native output means you're not fighting upscaling artifacts when a client wants to print or use at large format. And the chain-of-thought composition means the first-pass output is closer to usable — less iteration, fewer regenerations, lower overall spend per asset.
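The per-client prompt template library described above can be sketched in a few lines. This is a minimal illustration, not a prescribed structure: the template names, the placeholder fields (`product`, `surface`, `accents`), and the second template's wording are all hypothetical. Only the first template's text mirrors the perfume prompt from my test.

```python
from string import Template

# Hypothetical per-client templates; the keys and placeholder names are
# illustrative and not part of the Segmind API.
PROMPT_TEMPLATES = {
    "luxury_fragrance": Template(
        "A luxurious $product on $surface, $accents, golden studio lighting, "
        "commercial advertisement quality, photorealistic"
    ),
    "streetwear": Template(
        "High-fashion editorial, model wearing $product, $surface setting, "
        "$accents, side lighting, photorealistic"
    ),
}


def build_prompt(client: str, **descriptors: str) -> str:
    """Fill one client's template; raises KeyError if a descriptor is missing."""
    return PROMPT_TEMPLATES[client].substitute(**descriptors)


def build_variants(client: str, products: list[str], **shared: str) -> list[str]:
    """Build one prompt per product, reusing the shared scene descriptors."""
    return [build_prompt(client, product=p, **shared) for p in products]


prompts = build_variants(
    "luxury_fragrance",
    ["perfume bottle", "cologne flask"],
    surface="marble",
    accents="rose petals",
)
for p in prompts:
    print(p)
```

Each string in `prompts` can be passed to `generate_product_visual` unchanged. Using `substitute` rather than `safe_substitute` is deliberate: a missing descriptor fails loudly before any paid generation runs.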

Use Case 2: Movie Making and Film Studios

The film production workflow has always had a pre-visualization bottleneck. Concept art takes days. Storyboard revisions eat into pre-production schedules. VFX teams need reference imagery to scope shots before a single frame of the actual film gets shot. AI image generation tools that can produce cinematic-quality concept art on demand are genuinely useful here — and the bar for "useful" in a film context is higher than most other industries.

I ran two film-focused tests: a sci-fi alien desert landscape (the kind of establishing shot that would cost a full day with a matte painter) and a fantasy enchanted forest (detailed environment, specific lighting, multiple compositional elements). The alien landscape at 4K came back with the kind of wide-angle composition logic you'd expect from an experienced concept artist: the astronaut silhouette, the dual suns, and the scale relationship between them all landed correctly without detailed compositional direction in the prompt.

    import requests

    def generate_concept_art(scene_description, api_key):
        """Request one 4K concept image and return its URL."""
        response = requests.post(
            "https://api.segmind.com/v1/wan2.7-image-pro",
            headers={"x-api-key": api_key},
            json={
                "messages": [
                    {
                        "role": "user",
                        "content": [{"type": "text", "text": scene_description}]
                    }
                ],
                "size": "4K"  # native 4K for film-quality output
            },
            timeout=120,  # arbitrary but generous; generation can take a while
        )
        response.raise_for_status()  # fail on HTTP errors instead of a KeyError below
        return response.json()["choices"][0]["message"]["content"][0]["image"]

    concept = generate_concept_art(
        "A vast alien desert landscape at twilight, lone astronaut silhouetted against "
        "two suns on the horizon, cinematic widescreen, film production quality, photorealistic",
        "YOUR_API_KEY"
    )

Figure: film concept art at 4K, a sci-fi landscape and a fantasy forest environment, generated via Segmind.

For a film team, the ROI case is clear. A concept artist billing $800/day can produce maybe 3-5 solid concepts. With Wan 2.7 Pro at 4K via the Segmind API, a director or producer can generate 50 scene variants in an afternoon, identify the 5 worth developing further, and hand those to the concept artist as starting references. That's a workflow change, not just a cost change.
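To put rough numbers on that comparison, here is a back-of-envelope sketch using only the figures quoted in this post ($0.037 per generation, $800 per day, 3 to 5 concepts per day). It ignores the time spent writing prompts and reviewing output, so treat it as an order-of-magnitude check rather than a budget.

```python
from decimal import Decimal

PRICE_PER_IMAGE = Decimal("0.037")  # USD per generation, as quoted in this review
ARTIST_DAY_RATE = Decimal("800")    # USD per day, the figure used above


def generation_cost(n_images: int) -> Decimal:
    """API spend for n_images generations at the quoted per-image price."""
    return PRICE_PER_IMAGE * n_images


def artist_cost_per_concept(concepts_per_day: int) -> Decimal:
    """Day rate spread evenly over the concepts produced that day."""
    return ARTIST_DAY_RATE / concepts_per_day


api_cost = generation_cost(50)
print(f"50 variants via API: ${api_cost:.2f}")
print(f"Artist cost per concept at 5/day: ${artist_cost_per_concept(5):.2f}")
print(f"Artist cost per concept at 3/day: ${artist_cost_per_concept(3):.2f}")
```

The point of the numbers is not that the API replaces the artist. In the workflow above the artist still develops the shortlisted concepts; the generation spend is just small enough that exploring 50 directions first costs almost nothing.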

Use Case 3: Production Houses and MCNs

Multi-channel networks and production houses face a volume problem that most image generation discussions underestimate. An MCN managing 200 YouTube channels needs thumbnails, banner art, promotional graphics, and B-roll imagery continuously, at scale, and in formats that match each channel's visual brand. The Google Trends data I pulled shows search volume for "AI image generation tools" rising through early 2026, which tracks what I hear from people running these operations.

I tested a content creator studio setup prompt — the kind of workspace imagery that channels use for thumbnails, sponsor integrations, and brand materials. The output captured the aspirational-productive aesthetic that performs well in this space: ring lights, dual monitors, clean desk setup, colored LED accents. These are exactly the kinds of assets that a production team can standardize into a prompt template and run as a batch job.

    import concurrent.futures

    import requests

    def generate_thumbnail_asset(channel_brief, api_key):
        """Request one 2K image for a channel brief and return its URL."""
        response = requests.post(
            "https://api.segmind.com/v1/wan2.7-image-pro",
            headers={"x-api-key": api_key},
            json={
                "messages": [
                    {
                        "role": "user",
                        "content": [{"type": "text", "text": channel_brief}]
                    }
                ],
                "size": "2K"
            },
            timeout=120,  # arbitrary but generous; generation can take a while
        )
        response.raise_for_status()  # fail on HTTP errors instead of a KeyError below
        return response.json()["choices"][0]["message"]["content"][0]["image"]

    # Generate assets for multiple channels in parallel
    channel_briefs = [
        "Modern YouTube studio, ring lights, gaming setup, RGB lighting, energetic",
        "Minimalist podcast studio, professional microphone, neutral tones, calm",
        "Outdoor content creator setup, natural lighting, adventure gear, dynamic"
    ]

    api_key = "YOUR_API_KEY"

    with concurrent.futures.ThreadPoolExecutor(max_workers=3) as executor:
        futures = [executor.submit(generate_thumbnail_asset, b, api_key) for b in channel_briefs]
        # result() re-raises any worker exception; URLs come back in brief order
        urls = [f.result() for f in futures]

Content creator studio asset for MCN use — single API call, ready for thumbnail or brand use.

Time savings at scale: if a production team generates 500 channel assets per month and each used to take a designer 30 minutes, that's 250 hours of design time. Through the Segmind API, the same volume runs as a batch job and costs about $18.50 in generation fees (500 × $0.037), before any time spent reviewing the output.
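One practical caveat for batches of this size: the parallel snippet above collects results with `f.result()`, so a single failed call raises and you lose track of which briefs succeeded. The sketch below is one way to record failures per brief instead. It is not something the Segmind docs prescribe, and `fake_generate` is a stand-in I made up so the example runs offline; in a real pipeline you would pass a function that wraps the API call.

```python
from concurrent.futures import ThreadPoolExecutor, as_completed
from typing import Callable


def run_batch(
    briefs: list[str],
    generate: Callable[[str], str],
    max_workers: int = 3,
) -> tuple[dict[str, str], dict[str, str]]:
    """Run generate(brief) in parallel; return (urls_by_brief, errors_by_brief).

    Briefs are used as dict keys, so duplicates would overwrite each other.
    """
    urls: dict[str, str] = {}
    errors: dict[str, str] = {}
    with ThreadPoolExecutor(max_workers=max_workers) as executor:
        future_to_brief = {executor.submit(generate, b): b for b in briefs}
        for future in as_completed(future_to_brief):
            brief = future_to_brief[future]
            try:
                urls[brief] = future.result()
            except Exception as exc:  # record the failure and keep going
                errors[brief] = repr(exc)
    return urls, errors


def fake_generate(brief: str) -> str:
    """Hypothetical stand-in for a real API call; fails on demand."""
    if "fail" in brief:
        raise RuntimeError("simulated API error")
    return f"https://example.invalid/{len(brief)}.png"


urls, errors = run_batch(
    ["Modern YouTube studio", "please fail", "Minimalist podcast studio"],
    fake_generate,
)
print(f"{len(urls)} succeeded, {len(errors)} failed")
```

The `errors` dict gives you a retry list for the next run, which is usually more useful in an overnight job than a stack trace from the first failure.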

Developer Integration Guide

The full working API call in Python — this is all you need to get started with Wan 2.7 Image Pro via Segmind:

    import requests

    API_KEY = "YOUR_SEGMIND_API_KEY"

    response = requests.post(
        "https://api.segmind.com/v1/wan2.7-image-pro",
        headers={"x-api-key": API_KEY},
        json={
            "messages": [
                {
                    "role": "user",
                    "content": [
                        {"type": "text", "text": "Your detailed prompt here"}
                    ]
                }
            ],
            "size": "2K",  # "1K", "2K", or "4K"
            "seed": 42  # optional, for reproducible results
        },
        timeout=120,  # arbitrary but generous; generation can take a while
    )
    response.raise_for_status()  # stop here on HTTP errors

    data = response.json()
    image_url = data["choices"][0]["message"]["content"][0]["image"]
    print(f"Generated: {image_url}")
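The call above indexes straight into the JSON, which works until the response comes back in a different shape (an error body, an empty `choices` list). A small helper makes that failure readable. This is a sketch that assumes only the response structure shown in this post; the function name and the sample payload are mine, not part of any SDK.

```python
from typing import Any


def extract_image_url(data: Any) -> str:
    """Return the first image URL from a response shaped like the one above.

    Raises ValueError with the offending payload if the shape is unexpected.
    """
    try:
        url = data["choices"][0]["message"]["content"][0]["image"]
    except (KeyError, IndexError, TypeError) as exc:
        raise ValueError(f"unexpected response shape: {data!r}") from exc
    if not isinstance(url, str) or not url:
        raise ValueError(f"image field is not a URL string: {url!r}")
    return url


# Hand-written sample in the documented shape, so the sketch runs offline.
sample = {
    "choices": [
        {"message": {"content": [{"image": "https://example.invalid/out.png"}]}}
    ]
}
image_url = extract_image_url(sample)
print(image_url)
```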

Three parameters worth knowing: size controls output resolution (1K is fastest, 4K is highest quality), seed lets you pin a specific generation for reproducibility across a pipeline, and the optional image field accepts a reference image URL for style or subject consistency. For batch processing, the synchronous response pattern means you can use concurrent.futures.ThreadPoolExecutor to run parallel calls without building polling infrastructure. Full docs at segmind.com/models/wan2.7-image-pro.
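Those three parameters can be gathered into one payload builder, sketched below. The `messages`, `size`, and `seed` fields match the call shown earlier. Where the reference image goes is an assumption on my part: I've placed it as an extra content item with an `image` key, mirroring the response format, but check the model page for the exact field before relying on it.

```python
from typing import Optional

VALID_SIZES = ("1K", "2K", "4K")  # the three sizes named in this post


def build_payload(
    prompt: str,
    size: str = "2K",
    seed: Optional[int] = None,
    reference_image_url: Optional[str] = None,
) -> dict:
    """Assemble the JSON body for a generation request."""
    if size not in VALID_SIZES:
        raise ValueError(f"size must be one of {VALID_SIZES}, got {size!r}")

    content = []
    if reference_image_url is not None:
        # ASSUMPTION: reference images are passed as a content item with an
        # "image" key. The post only says an optional image field exists.
        content.append({"type": "image", "image": reference_image_url})
    content.append({"type": "text", "text": prompt})

    payload = {
        "messages": [{"role": "user", "content": content}],
        "size": size,
    }
    if seed is not None:
        payload["seed"] = seed  # pin the generation for reproducibility
    return payload


payload = build_payload("Your detailed prompt here", size="4K", seed=42)
print(payload)
```

The returned dict drops into `requests.post(..., json=payload)`. Validating `size` locally catches a typo before it costs a round trip.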

Honest Assessment

What it does very well: compositional intelligence. Complex multi-element scenes that would require extensive prompt engineering with other models tend to come back structured correctly. The chain-of-thought approach shows up most clearly here. It also handles product photography prompts exceptionally well: the lighting logic and surface rendering for commercial photography use cases are noticeably better than what I see from comparable models.

Where it has room to grow: highly stylized or non-photorealistic outputs. The model is clearly optimized for photorealistic generation, and while it can do stylized work, it's not where it shines. If your use case is anime-style illustration or abstract art, there are more targeted options. The API also doesn't currently expose fine-grained style parameters (like CFG scale or sampler choice) that advanced users sometimes want for precise control.

Best fit: commercial photography replacement, cinematic concept art, product visuals, content thumbnails, reference imagery for creative pipelines. Not a fit: illustration styles, fine-art generation, use cases requiring extreme artistic stylization.

Frequently Asked Questions

What is Wan 2.7 Image Pro used for?

It's a text-to-image model optimized for photorealistic image generation. Best uses include commercial product photography, film concept art, marketing visuals, and content creation assets — anything where you need high-quality output at scale via API.

How do I use the Wan 2.7 Image Pro API?

Send a POST request to https://api.segmind.com/v1/wan2.7-image-pro with your Segmind API key in the x-api-key header and a messages array containing your prompt. The response is JSON with an image URL. See the Developer Integration section above for the full code example.

What is the best AI image generation tool for marketing agencies in 2026?

It depends on volume and output requirements. For agencies needing commercial-grade product and campaign imagery at API scale, Wan 2.7 Image Pro via Segmind is worth testing — native 4K output and strong compositional quality make it suitable for client-facing work.

Is Wan 2.7 Image Pro free to use?

Not free, but affordable. Each generation costs $0.037. Segmind provides credits on signup for testing. For production use, the per-image cost is competitive relative to the output quality tier.

How does Wan 2.7 Image Pro compare to other AI image generation tools?

The main differentiator is chain-of-thought reasoning at inference time, which improves compositional accuracy on complex prompts. Native 4K output also distinguishes it from models that generate at lower resolution and upscale. For pure speed or stylized output, alternatives may suit better.

Can Wan 2.7 Image Pro be used for film production?

Yes. I ran multiple film-style prompts (sci-fi landscapes, fantasy environments) at 4K and the results are suitable for pre-visualization, storyboarding reference, and concept art. The model handles cinematic composition well.

Conclusion

Wan 2.7 Image Pro covers three high-value use cases well: commercial visual production for marketing agencies, concept art and pre-visualization for film teams, and scaled asset generation for content production houses. The chain-of-thought reasoning makes it noticeably more reliable on complex prompts, and native 4K output means the results are production-usable without additional processing.

Try it now at segmind.com/models/wan2.7-image-pro — available via API with no setup required.